No Free Lunch

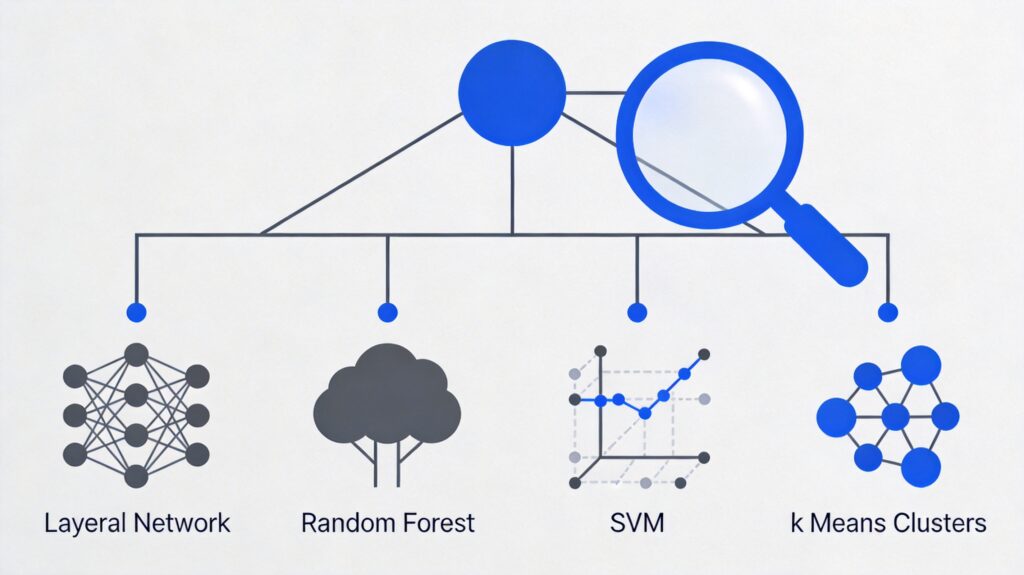

A fundamental theorem in machine learning is the "No Free Lunch" theorem. It states that no single algorithm works best for every problem, which is especially relevant to supervised learning (e.g., predictive modeling). The right choice depends on factors such as the size and structure of the dataset and the specific problem being solved.

Primary Machine Learning Tasks

We will first discuss the three most common machine learning tasks: Regression, Classification, and Clustering, along with two key dimensionality reduction tasks: Feature Selection and Feature Extraction.

1. Regression

Regression is used to predict continuous numerical variables, such as house prices, stock values, or student grades. Its defining characteristic is training data with numerical labels.

1.1 (Regularized) Linear Regression

Linear regression is the most common algorithm for regression tasks. It fits the data with a hyperplane (in one dimension, a straight line). Regularized variants (LASSO, Ridge, Elastic Net) penalize large coefficients, which helps prevent overfitting.

Pros: Easy to understand and interpret; can prevent overfitting via regularization; models are easy to update.

Cons: Struggles with non-linear relationships; not flexible enough to identify complex patterns.
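As a minimal sketch of the regularization idea, the NumPy code below fits Ridge regression (the L2-penalized variant) using its closed-form solution. The function name `fit_ridge`, the penalty strength `alpha`, and the synthetic data are illustrative assumptions; in practice a library implementation such as scikit-learn's `Ridge` would normally be used.

```python
import numpy as np

def fit_ridge(X, y, alpha=1.0):
    """Closed-form Ridge regression; the intercept is left unpenalized."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean  # centering removes the intercept from the penalty
    n_features = X.shape[1]
    # Solve (Xc^T Xc + alpha * I) w = Xc^T yc instead of forming an explicit inverse
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(n_features), Xc.T @ yc)
    b = y_mean - x_mean @ w
    return w, b

def predict(X, w, b):
    return X @ w + b

# Synthetic data: y = 2*x0 - 1*x1 + 0.5*x2 + 3, plus a little noise
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = X @ np.array([2.0, -1.0, 0.5]) + 3.0 + rng.normal(scale=0.1, size=200)

w_weak, b_weak = fit_ridge(X, y, alpha=0.01)    # almost plain least squares
w_strong, _ = fit_ridge(X, y, alpha=1000.0)     # heavy shrinkage
print(w_weak.round(2), round(float(b_weak), 2))
print(np.linalg.norm(w_strong) < np.linalg.norm(w_weak))  # True: a larger alpha shrinks the weights
```

Replacing the squared (L2) penalty with an absolute-value (L1) penalty gives LASSO, which has no closed-form solution and is usually fit iteratively, for example by coordinate descent.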

1.2 Regression Trees (Ensemble Methods)

Regression trees (a type of decision tree) learn hierarchical, non-linear relationships by repeatedly splitting the data on feature values. Ensemble methods such as Random Forest and Gradient Boosted Trees combine the predictions of many trees and often perform very well in practice.

Pros: Can learn non-linear relationships; robust to outliers; ensemble methods often win in practice.

Cons: A single tree is prone to overfitting, but ensemble methods mitigate this.
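The NumPy sketch below grows a small regression tree by greedily choosing the split that most reduces squared error, then averages trees fit on bootstrap samples (bagging). It illustrates the idea under simplifying assumptions: the function names and the `max_depth`/`min_samples` stopping rules are hypothetical, and a real Random Forest additionally samples a random subset of features at each split, which this sketch omits.

```python
import numpy as np

def build_tree(X, y, depth=0, max_depth=3, min_samples=2):
    """Recursively split on the (feature, threshold) pair that most reduces squared error."""
    if depth >= max_depth or len(y) < min_samples or np.all(y == y[0]):
        return {"leaf": float(y.mean())}
    best = None
    best_sse = ((y - y.mean()) ** 2).sum()  # a split must beat the unsplit node
    for j in range(X.shape[1]):
        for t in np.unique(X[:, j])[:-1]:  # skip the max value so neither side is empty
            left = X[:, j] <= t
            yl, yr = y[left], y[~left]
            sse = ((yl - yl.mean()) ** 2).sum() + ((yr - yr.mean()) ** 2).sum()
            if sse < best_sse:
                best_sse, best = sse, (j, t)
    if best is None:
        return {"leaf": float(y.mean())}
    j, t = best
    left = X[:, j] <= t
    return {"feature": j, "threshold": t,
            "left": build_tree(X[left], y[left], depth + 1, max_depth, min_samples),
            "right": build_tree(X[~left], y[~left], depth + 1, max_depth, min_samples)}

def predict_one(node, x):
    while "leaf" not in node:
        node = node["left"] if x[node["feature"]] <= node["threshold"] else node["right"]
    return node["leaf"]

def predict(node, X):
    return np.array([predict_one(node, x) for x in X])

def fit_bagged_trees(X, y, n_trees=10, seed=0, **tree_kwargs):
    """Bagging: each tree is trained on a bootstrap sample (rows drawn with replacement)."""
    rng = np.random.default_rng(seed)
    trees = []
    for _ in range(n_trees):
        idx = rng.integers(0, len(y), size=len(y))
        trees.append(build_tree(X[idx], y[idx], **tree_kwargs))
    return trees

def predict_bagged(trees, X):
    return np.mean([predict(t, X) for t in trees], axis=0)  # average the trees' predictions

# A step function that no straight line can fit well
X = np.arange(10, dtype=float).reshape(-1, 1)
y = np.where(X[:, 0] < 5, 1.0, 4.0)
tree = build_tree(X, y, max_depth=2)
print(predict(tree, X))  # reproduces the step exactly
forest = fit_bagged_trees(X, y, n_trees=10, max_depth=2)
print(predict_bagged(forest, X).round(2))
```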

1.3 Deep Learning

Deep learning uses multi-layer neural networks to learn extremely complex patterns. It excels in domains like images, audio, and text.

Pros: State-of-the-art in specific domains (e.g., computer vision); can automatically learn features, reducing reliance on feature engineering.

Cons: Requires large amounts of training data; computationally intensive; complex hyperparameter tuning; may not outperform ensemble methods on classic problems.
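To show the mechanics that deep learning frameworks automate, here is a deliberately shallow NumPy sketch: a network with one hidden `tanh` layer, trained by full-batch gradient descent with hand-written backpropagation to fit a sine curve. The layer width, learning rate, and epoch count are arbitrary assumptions; real deep networks stack many layers and are trained with libraries such as PyTorch or JAX, which compute gradients automatically.

```python
import numpy as np

def train_mlp(X, y, hidden=16, lr=0.02, epochs=3000, seed=0):
    """One hidden tanh layer + linear output, trained by full-batch gradient descent on MSE."""
    rng = np.random.default_rng(seed)
    W1 = rng.normal(scale=1.0, size=(X.shape[1], hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(scale=0.1, size=(hidden, 1))
    b2 = np.zeros(1)
    n = len(X)
    losses = []
    for _ in range(epochs):
        # Forward pass
        H = np.tanh(X @ W1 + b1)
        err = H @ W2 + b2 - y.reshape(-1, 1)
        losses.append(float((err ** 2).mean()))
        # Backward pass: the chain rule written out by hand
        d_out = 2 * err / n
        dW2 = H.T @ d_out
        db2 = d_out.sum(axis=0)
        dZ = (d_out @ W2.T) * (1 - H ** 2)  # tanh'(z) = 1 - tanh(z)^2
        dW1 = X.T @ dZ
        db1 = dZ.sum(axis=0)
        # Gradient descent step
        W1 -= lr * dW1
        b1 -= lr * db1
        W2 -= lr * dW2
        b2 -= lr * db2
    return (W1, b1, W2, b2), losses

def predict(params, X):
    W1, b1, W2, b2 = params
    return (np.tanh(X @ W1 + b1) @ W2 + b2).ravel()

X = np.linspace(-3, 3, 100).reshape(-1, 1)
y = np.sin(X).ravel()
params, losses = train_mlp(X, y)
print(f"MSE before training: {losses[0]:.3f}, after: {losses[-1]:.3f}")
```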

1.4 k-Nearest Neighbors (Honorable Mention)

k-Nearest Neighbors is an instance-based algorithm: it stores the training data and predicts a new observation by averaging the targets of its k most similar training examples. It is memory-intensive and performs poorly with high-dimensional data. In practice, regularized regression or tree ensembles are usually preferred.
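A minimal NumPy sketch of k-Nearest Neighbors regression follows. The function name `knn_regress`, Euclidean distance, and plain averaging of the neighbors' targets are assumptions (weighted averages and other distance metrics are common alternatives). Note that the whole training set must be kept and compared against every query, which is where the memory cost comes from.

```python
import numpy as np

def knn_regress(X_train, y_train, X_query, k=3):
    """Predict each query point as the mean target of its k nearest training points."""
    # Pairwise Euclidean distances, shape (n_query, n_train)
    d = np.linalg.norm(X_query[:, None, :] - X_train[None, :, :], axis=2)
    nearest = np.argsort(d, axis=1)[:, :k]  # indices of the k closest training points
    return y_train[nearest].mean(axis=1)

X_train = np.array([[0.0], [1.0], [2.0], [10.0], [11.0], [12.0]])
y_train = np.array([1.0, 2.0, 3.0, 20.0, 21.0, 22.0])
pred = knn_regress(X_train, y_train, np.array([[1.0], [11.0]]), k=3)
print(pred)  # averages of the three closest targets: 2.0 and 21.0
```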

2. Classification

Classification is used to predict categorical variables, such as employee churn, email filtering, or financial fraud. Many regression algorithms have classification counterparts.

2.1 (Regularized) Logistic Regression

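Logistic regression is the classification counterpart of linear regression. It passes a linear combination of the features through the logistic (sigmoid) function, which maps predictions into the interval between 0 and 1 so they can be interpreted as class probabilities.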

Pros: Output has a probabilistic interpretation; can avoid overfitting via regularization; models are easy to update.

Cons: Tends to underperform when there are multiple or non-linear decision boundaries.
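The sketch below fits logistic regression, optionally L2-regularized, with batch gradient descent in NumPy. The learning rate, epoch count, the `l2` penalty parameter, and the toy one-feature dataset are illustrative assumptions; library implementations typically use more sophisticated solvers.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fit_logistic(X, y, lr=0.1, epochs=1000, l2=0.0):
    """Batch gradient descent on the mean log-loss plus an optional L2 penalty on w."""
    w = np.zeros(X.shape[1])
    b = 0.0
    n = len(y)
    for _ in range(epochs):
        p = sigmoid(X @ w + b)  # predicted probabilities in (0, 1)
        grad_w = X.T @ (p - y) / n + l2 * w
        grad_b = (p - y).mean()
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

def predict_proba(X, w, b):
    return sigmoid(X @ w + b)

X = np.array([[-3.0], [-2.0], [-1.0], [1.0], [2.0], [3.0]])
y = np.array([0, 0, 0, 1, 1, 1])
w, b = fit_logistic(X, y, l2=0.01)
labels = (predict_proba(X, w, b) >= 0.5).astype(int)  # threshold probabilities at 0.5
print(labels)  # [0 0 0 1 1 1]
```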

2.2 Classification Trees (Ensemble Methods)

Classification trees are the classification counterparts of regression trees; both belong to the CART (Classification and Regression Trees) family. Their ensembles (Random Forest, Gradient Boosted Trees) also perform very well.

Pros: Robust to anomalous data; scalable; can naturally model non-linear decision boundaries.

Cons: A single tree is prone to overfitting, but ensemble methods mitigate this.
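Classification trees are grown like regression trees, but splits are typically scored with an impurity measure such as Gini impurity instead of squared error. The NumPy sketch below shows the split search on a single feature; the function names and toy data are assumptions, and a full tree would apply this search recursively across all features.

```python
import numpy as np

def gini(labels):
    """Gini impurity: chance of mislabeling a random sample drawn from this node."""
    _, counts = np.unique(labels, return_counts=True)
    p = counts / counts.sum()
    return 1.0 - np.sum(p ** 2)

def best_split(x, y):
    """Find the threshold on one feature that minimizes the children's weighted Gini impurity."""
    best_t, best_score = None, gini(y)
    for t in np.unique(x)[:-1]:
        left, right = y[x <= t], y[x > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(y)
        if score < best_score:
            best_t, best_score = t, score
    return best_t, best_score

x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array(["a", "a", "a", "b", "b", "b"])
print(gini(y))  # 0.5
t, score = best_split(x, y)
print(t, score)  # threshold 3.0 leaves two pure children (impurity 0.0)
```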

2.3 Deep Learning

Deep learning is also applicable to classification tasks, especially on image, audio, and text data.

Pros: Excels with specific data types.

Cons: Requires large amounts of data; not a general-purpose algorithm.

2.4 Support Vector Machines

Support Vector Machines use the kernel trick to implicitly map the data into a higher-dimensional space, where a non-linear problem may become linearly separable. They then find the decision boundary that maximizes the margin between the classes.

Pros: Can model non-linear decision boundaries; multiple kernel functions available; robust to overfitting.

Cons: Memory-intensive; choosing the right kernel is tricky; does not scale well to large datasets; in practice, Random Forest often performs better.
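The kernel trick can be checked numerically. For 2-D inputs, the degree-2 polynomial kernel (a·b)² equals an ordinary dot product after the explicit feature map φ(x) = (x1², √2·x1·x2, x2²), so an SVM can work in that 3-D space while only computing dot products in the original 2-D space. The NumPy snippet below is a sketch of that identity plus a Gaussian (RBF) kernel; it does not implement the margin-maximizing optimization itself, which libraries such as LIBSVM handle with specialized solvers. The function names and the `gamma` value are assumptions.

```python
import numpy as np

def poly2_features(v):
    """Explicit degree-2 feature map for a 2-D point: (x1^2, sqrt(2)*x1*x2, x2^2)."""
    x1, x2 = v
    return np.array([x1 ** 2, np.sqrt(2) * x1 * x2, x2 ** 2])

def poly2_kernel(a, b):
    """Degree-2 homogeneous polynomial kernel, computed without building the features."""
    return np.dot(a, b) ** 2

def rbf_kernel(a, b, gamma=0.5):
    """Gaussian (RBF) kernel; its implicit feature space is infinite-dimensional."""
    return np.exp(-gamma * np.sum((a - b) ** 2))

a = np.array([1.0, 2.0])
b = np.array([3.0, -1.0])
print(poly2_kernel(a, b))                             # 1.0, since (1*3 + 2*(-1))^2 = 1
print(np.dot(poly2_features(a), poly2_features(b)))   # same value, up to floating-point rounding
print(rbf_kernel(a, b))                               # similarity in (0, 1]
```

In the mapped space, the circle x1² + x2² = r² becomes the plane z1 + z3 = r², which is why points inside and outside a circle, not separable by a line in 2-D, become linearly separable after the mapping.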

2.5 Naive Bayes

Naive Bayes is based on conditional probability and counting. It assumes that the features are conditionally independent of one another given the class.

Pros: Simple to implement; easy to update with new data; often performs well in practice.

Cons: The independence assumption is usually false; often superseded by more complex algorithms.
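Because Naive Bayes is mostly counting, a multinomial version for text fits in a few lines of plain Python. This is a sketch with assumed details: add-one (Laplace) smoothing via `alpha`, log-probabilities to avoid numerical underflow, ignoring words never seen in training, and a made-up four-document spam/ham corpus. Updating the model with new data only requires incrementing the counts.

```python
import math
from collections import Counter, defaultdict

def train_nb(docs, labels, alpha=1.0):
    """Multinomial Naive Bayes by counting: documents per class and words per class."""
    class_counts = Counter(labels)
    word_counts = defaultdict(Counter)
    vocab = set()
    for words, c in zip(docs, labels):
        word_counts[c].update(words)
        vocab.update(words)
    return class_counts, word_counts, vocab, alpha

def predict_nb(model, words):
    class_counts, word_counts, vocab, alpha = model
    n_docs = sum(class_counts.values())
    scores = {}
    for c in class_counts:
        total = sum(word_counts[c].values())
        # log P(c) + sum of log P(word | c): the "naive" step treats words as independent given c
        score = math.log(class_counts[c] / n_docs)
        for w in words:
            if w in vocab:
                score += math.log((word_counts[c][w] + alpha) / (total + alpha * len(vocab)))
        scores[c] = score
    return max(scores, key=scores.get)

docs = [["win", "money", "now"], ["cheap", "money", "offer"],
        ["meeting", "at", "noon"], ["project", "meeting", "notes"]]
labels = ["spam", "spam", "ham", "ham"]
model = train_nb(docs, labels)
print(predict_nb(model, ["money", "offer"]))    # spam
print(predict_nb(model, ["meeting", "notes"]))  # ham
```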

3. Clustering

Clustering is an unsupervised learning task used to discover natural groups (clusters) within data, such as user personas or groups of similar items.

3.1 K-Means

K-Means groups points by their geometric distance to cluster centers (centroids), which tends to produce spherical clusters.

Pros: Fast, simple, and flexible; good for beginners.

Cons: Requires pre-specifying K (number of clusters); performs poorly on non-spherical clusters.
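Below is a minimal NumPy sketch of K-Means using Lloyd's algorithm, which alternates between assigning points to the nearest centroid and moving each centroid to the mean of its points. As a simplifying assumption it uses a deterministic farthest-point initialization rather than the randomized k-means++ scheme common in libraries; note that `k` must still be supplied up front.

```python
import numpy as np

def kmeans(X, k, n_iter=100):
    """Lloyd's algorithm with a deterministic farthest-point initialization."""
    centroids = [X[0]]
    for _ in range(1, k):
        # Next initial centroid: the point farthest from all centroids chosen so far
        d = np.min([((X - c) ** 2).sum(axis=1) for c in centroids], axis=0)
        centroids.append(X[np.argmax(d)])
    centroids = np.array(centroids, dtype=float)
    for _ in range(n_iter):
        # Assignment step: nearest centroid by squared Euclidean distance
        dists = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        # Update step: move each centroid to its points' mean (keep it if it has no points)
        new = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                        for j in range(k)])
        if np.allclose(new, centroids):
            break
        centroids = new
    return labels, centroids

rng = np.random.default_rng(0)
X = np.vstack([rng.normal([0, 0], 0.5, size=(50, 2)),   # blob around (0, 0)
               rng.normal([5, 5], 0.5, size=(50, 2))])  # blob around (5, 5)
labels, centroids = kmeans(X, k=2)
print(centroids.round(2))
```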

3.2 Affinity Propagation

Affinity Propagation determines clusters based on graph distances between points and tends to produce smaller, unevenly sized clusters.

Pros: Does not require specifying the number of clusters.

Cons: Slow to train; high memory consumption; difficult to scale; also assumes spherical clusters.

3.3 Hierarchical Clustering

Hierarchical clustering starts with each point as its own cluster and progressively merges them, forming a hierarchy.

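Pros: Does not assume spherical clusters; can scale to larger datasets when merges are restricted by a connectivity constraint (the naive algorithm needs memory quadratic in the number of points).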

Cons: Still requires choosing the final number of clusters (cutting the hierarchy).
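The NumPy sketch below implements naive agglomerative clustering with single linkage (the distance between two clusters is the distance between their closest members). Stopping once `n_clusters` remain plays the role of cutting the hierarchy, and the recorded merges form the hierarchy itself. The function name and `linkage` options are assumptions, and the brute-force pair search is only suitable for small inputs; production libraries use far more efficient algorithms.

```python
import numpy as np

def agglomerative(X, n_clusters, linkage="single"):
    """Naive bottom-up clustering: start with singletons and repeatedly merge the closest pair."""
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)  # full pairwise distance matrix
    clusters = [[i] for i in range(len(X))]
    merges = []  # the hierarchy, recorded as (members_a, members_b, merge_distance)
    while len(clusters) > n_clusters:
        best_d, best_a, best_b = np.inf, -1, -1
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                block = D[np.ix_(clusters[a], clusters[b])]
                # "single": closest members; anything else: complete linkage (farthest members)
                d = block.min() if linkage == "single" else block.max()
                if d < best_d:
                    best_d, best_a, best_b = d, a, b
        merges.append((clusters[best_a], clusters[best_b], best_d))
        clusters[best_a] = clusters[best_a] + clusters[best_b]
        del clusters[best_b]
    return clusters, merges

X = np.array([[0.0], [1.0], [10.0], [11.0], [30.0]])
clusters, merges = agglomerative(X, n_clusters=3)
print(clusters)  # [[0, 1], [2, 3], [4]]
```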

3.4 DBSCAN

DBSCAN performs density-based clustering: it groups dense regions of points into clusters. Its variant HDBSCAN allows clusters of varying density.

Pros: Does not assume spherical clusters; scalable; can handle noise points.

Cons: Requires tuning hyperparameters that define density; sensitive to these settings.
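A compact NumPy sketch of DBSCAN follows. A point is a core point if at least `min_samples` points (itself included) lie within distance `eps`; clusters grow outward from core points, and points reachable from no core point are labeled -1 (noise). The brute-force distance matrix and the function name are assumptions for illustration, and HDBSCAN's variable-density handling is not shown.

```python
import numpy as np

def dbscan(X, eps, min_samples):
    """Density-based clustering; returns one label per point, with -1 marking noise."""
    n = len(X)
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    neighbors = [np.flatnonzero(D[i] <= eps) for i in range(n)]  # includes the point itself
    is_core = np.array([len(nb) >= min_samples for nb in neighbors])
    labels = np.full(n, -1)
    cluster = 0
    for i in range(n):
        if labels[i] != -1 or not is_core[i]:
            continue
        # Start a new cluster at this unassigned core point and expand through its neighbors
        labels[i] = cluster
        stack = [i]
        while stack:
            p = stack.pop()
            if not is_core[p]:
                continue  # border points join the cluster but do not expand it
            for q in neighbors[p]:
                if labels[q] == -1:
                    labels[q] = cluster
                    stack.append(q)
        cluster += 1
    return labels

X = np.array([[0, 0], [0, 0.5], [0.5, 0], [0.5, 0.5],          # dense group 1
              [10, 10], [10, 10.5], [10.5, 10], [10.5, 10.5],  # dense group 2
              [5, 5]], dtype=float)                            # isolated point
print(dbscan(X, eps=0.8, min_samples=3))  # [ 0  0  0  0  1  1  1  1 -1]
```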

The Curse of Dimensionality & Dimensionality Reduction

When the number of features is large relative to the number of samples, the data become sparse in feature space and models become difficult to train; this is the "Curse of Dimensionality." Dimensionality reduction counters it with two families of methods: Feature Selection and Feature Extraction.

4. Feature Selection

Feature Selection involves selecting a subset from the original feature set, removing irrelevant or redundant features.

4.1 Variance Threshold

Removes features with variance below a threshold (features with little variation).

Pros: Intuitively sound; a relatively safe form of dimensionality reduction.

Cons: Rarely sufficient on its own when the problem genuinely requires dimensionality reduction; the threshold must be set manually, which can be tricky.
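A minimal NumPy sketch of a variance threshold follows. The function name, the threshold of 0.01, and the toy matrix are assumptions. Because variance depends on each feature's units, a single threshold treats features on very different scales unequally. scikit-learn offers the same idea as `VarianceThreshold` in `sklearn.feature_selection`.

```python
import numpy as np

def variance_threshold(X, threshold=0.0):
    """Keep only the columns whose variance is strictly above `threshold`."""
    variances = X.var(axis=0)
    keep = variances > threshold
    return X[:, keep], keep

X = np.array([[1.0, 0.0, 3.1],
              [2.0, 0.0, 3.0],
              [3.0, 0.0, 3.1],
              [4.0, 0.0, 3.0]])
X_reduced, keep = variance_threshold(X, threshold=0.01)
print(keep)  # only the first column survives: the second is constant, the third barely varies
```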

4.2 Correlation Threshold

Removes one feature from each pair of highly correlated features, since such pairs carry largely redundant information.

Pros: Can improve model performance (speed, accuracy, robustness).

Cons: Requires manual threshold setting; setting it too low may discard useful information.
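The NumPy sketch below applies a correlation threshold greedily: scanning columns from left to right, it drops any column whose absolute Pearson correlation with an already-kept column exceeds the threshold. The scan order, the use of Pearson correlation, and the function name are assumptions; a different order can keep a different member of a correlated pair.

```python
import numpy as np

def correlation_filter(X, threshold=0.9):
    """Drop a column if its |Pearson r| with any already-kept column exceeds the threshold."""
    corr = np.abs(np.corrcoef(X, rowvar=False))  # columns are the variables
    kept = []
    for j in range(X.shape[1]):
        if all(corr[j, k] <= threshold for k in kept):
            kept.append(j)
    return X[:, kept], kept

rng = np.random.default_rng(0)
a = rng.normal(size=100)
b = rng.normal(size=100)
X = np.column_stack([a, 2 * a + 0.01 * rng.normal(size=100), b])  # column 1 nearly duplicates column 0
X_reduced, kept = correlation_filter(X, threshold=0.95)
print(kept)  # [0, 2]
```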

4.3 Genetic Algorithms

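Genetic algorithms are search methods inspired by evolution and natural selection. For supervised feature selection, they evolve a population of candidate feature subsets toward subsets that yield better models.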

Pros: Effective for high-dimensional data when exhaustive search is infeasible; suitable when original features must be retained.

Cons: Complex to implement; often unnecessary, because PCA or algorithms with built-in feature selection are usually more efficient.
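For illustration, here is a small NumPy sketch of a genetic algorithm that searches over feature subsets encoded as boolean masks, using tournament selection, uniform crossover, bit-flip mutation, and elitism. The fitness function (negative least-squares training error minus a penalty per selected feature), the population size, and every other setting are hypothetical choices; a real application would score subsets on held-out data, for example with cross-validation.

```python
import numpy as np

def fitness(mask, X, y, penalty=0.01):
    """Hypothetical fitness: -(least-squares training MSE on selected columns) - penalty per feature."""
    if not mask.any():
        return -np.inf
    Xs = np.column_stack([X[:, mask], np.ones(len(y))])  # selected features plus an intercept
    coef, *_ = np.linalg.lstsq(Xs, y, rcond=None)
    mse = np.mean((Xs @ coef - y) ** 2)
    return -mse - penalty * mask.sum()

def tournament(pop, scores, rng):
    """Pick two random individuals and return the fitter one."""
    i, j = rng.integers(0, len(pop), size=2)
    return pop[i] if scores[i] >= scores[j] else pop[j]

def ga_select(X, y, pop_size=20, generations=30, mutation_rate=0.05, seed=0):
    rng = np.random.default_rng(seed)
    n_features = X.shape[1]
    pop = rng.random((pop_size, n_features)) < 0.5  # random initial subsets as boolean masks
    history = []
    for _ in range(generations):
        scores = np.array([fitness(m, X, y) for m in pop])
        history.append(scores.max())
        children = [pop[scores.argmax()].copy()]  # elitism: the best mask survives unchanged
        while len(children) < pop_size:
            p1, p2 = tournament(pop, scores, rng), tournament(pop, scores, rng)
            child = np.where(rng.random(n_features) < 0.5, p1, p2)  # uniform crossover
            child ^= rng.random(n_features) < mutation_rate           # bit-flip mutation
            children.append(child)
        pop = np.array(children)
    scores = np.array([fitness(m, X, y) for m in pop])
    history.append(scores.max())
    return pop[scores.argmax()], history

rng = np.random.default_rng(1)
X = rng.normal(size=(200, 8))
y = 3 * X[:, 0] - 2 * X[:, 3] + rng.normal(scale=0.1, size=200)  # only features 0 and 3 matter
best_mask, history = ga_select(X, y)
print(np.flatnonzero(best_mask))
```

Elitism guarantees that the best fitness in the population never decreases from one generation to the next, which is why `history` is non-decreasing.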

4.4 Stepwise Search (Honorable Mention)

Selects features by adding (forward) or removing (backward) one feature at a time. It is not recommended: as a greedy algorithm, it often underperforms regularization-based methods.

5. Feature Extraction

Feature Extraction constructs a new, smaller set of features that retains most of the useful information.

5.1 Principal Component Analysis (PCA)

PCA constructs orthogonal linear combinations of original features, ordering principal components by explained variance.

Pros: Versatile, effective, quick to deploy; has many variants.

Cons: New features are not interpretable; requires manually choosing how much cumulative explained variance to keep.
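PCA can be sketched in a few lines of NumPy via the singular value decomposition (SVD) of the centered data. The function name, the 95% cumulative-variance cutoff, and the synthetic data are assumptions; the last two lines show one common way to turn a variance threshold into a number of components.

```python
import numpy as np

def pca(X, n_components):
    """PCA via SVD of the centered data matrix."""
    Xc = X - X.mean(axis=0)
    # Rows of Vt are orthonormal principal directions, sorted by decreasing singular value
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained_variance = S ** 2 / (len(X) - 1)
    ratio = explained_variance / explained_variance.sum()
    components = Vt[:n_components]
    return Xc @ components.T, components, ratio

rng = np.random.default_rng(0)
z = rng.normal(size=(200, 1))
X = np.hstack([z, 2 * z, rng.normal(scale=0.1, size=(200, 1))])  # mostly one underlying direction
scores, components, ratio = pca(X, n_components=1)
print(ratio.round(3))  # the first component carries almost all the variance
n_keep = int(np.searchsorted(np.cumsum(ratio), 0.95) + 1)  # components needed to reach 95%
print(n_keep)
```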

5.2 Linear Discriminant Analysis (LDA)

LDA is a supervised method that constructs linear combinations to maximize separation between classes.

Pros: Supervised nature may improve performance; variants are available.

Cons: New features are not interpretable; requires manual setting of the number of features; requires labeled data.
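The two-class case of Fisher's Linear Discriminant Analysis has a closed form: project onto w ∝ S_w⁻¹(μ₁ − μ₀), where S_w is the within-class scatter matrix and μ₀, μ₁ are the class means. The NumPy sketch below assumes two classes labeled 0 and 1 and uses made-up data that is spread widely along x but separated along y; multi-class LDA generalizes this through an eigenvalue problem.

```python
import numpy as np

def fisher_lda(X, y):
    """Two-class Fisher LDA: unit vector w proportional to S_w^{-1} (mu_1 - mu_0)."""
    X0, X1 = X[y == 0], X[y == 1]
    mu0, mu1 = X0.mean(axis=0), X1.mean(axis=0)
    # Within-class scatter: each class's scatter around its own mean, summed
    Sw = (X0 - mu0).T @ (X0 - mu0) + (X1 - mu1).T @ (X1 - mu1)
    w = np.linalg.solve(Sw, mu1 - mu0)
    return w / np.linalg.norm(w)

rng = np.random.default_rng(0)
X0 = rng.normal(size=(100, 2)) * [5.0, 0.3]               # class 0: wide in x, narrow in y
X1 = rng.normal(size=(100, 2)) * [5.0, 0.3] + [0.0, 4.0]  # class 1: shifted up along y
X = np.vstack([X0, X1])
y = np.array([0] * 100 + [1] * 100)
w = fisher_lda(X, y)
proj0, proj1 = X0 @ w, X1 @ w  # 1-D projections of each class
print(w.round(3))
```

On this data, PCA would keep the x-axis, the direction of largest variance, even though it does not separate the classes; LDA uses the labels and picks (approximately) the y-axis instead.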

5.3 Autoencoders

Autoencoders are neural networks that learn a compressed representation of data.

Pros: Perform well on data like images and speech.

Cons: Require large amounts of training data; not a general-purpose dimensionality reduction algorithm.
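As a minimal sketch, the NumPy code below trains a linear autoencoder: an encoder matrix compresses each 6-dimensional point to a 2-dimensional code and a decoder matrix reconstructs it, with both learned by gradient descent on reconstruction error. This is the simplest possible autoencoder, chosen as an assumption for brevity; with linear layers and squared error its optimum spans the same subspace as PCA, and the advantage on images and speech comes from deeper, non-linear networks built with deep learning libraries. The synthetic data, sizes, and learning rate are illustrative.

```python
import numpy as np

def train_linear_autoencoder(X, code_dim=2, lr=0.05, epochs=1000, seed=0):
    """Encoder W_e (d -> code_dim) and decoder W_d (code_dim -> d), trained on mean squared error."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    W_e = rng.normal(scale=0.1, size=(d, code_dim))
    W_d = rng.normal(scale=0.1, size=(code_dim, d))
    losses = []
    for _ in range(epochs):
        code = X @ W_e          # compressed representation
        err = code @ W_d - X    # reconstruction error
        losses.append(float((err ** 2).mean()))
        d_recon = 2 * err / err.size  # gradient of the mean over all entries
        grad_W_d = code.T @ d_recon
        grad_W_e = X.T @ (d_recon @ W_d.T)
        W_d -= lr * grad_W_d
        W_e -= lr * grad_W_e
    return W_e, W_d, losses

rng = np.random.default_rng(0)
Z = rng.normal(size=(300, 2))                                      # two hidden factors
X = Z @ rng.normal(size=(2, 6)) + 0.05 * rng.normal(size=(300, 6))
X -= X.mean(axis=0)  # center the data, since this sketch has no bias terms
W_e, W_d, losses = train_linear_autoencoder(X, code_dim=2)
codes = X @ W_e      # the learned 2-D representation
print(f"reconstruction MSE: {losses[0]:.3f} -> {losses[-1]:.3f}")
```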

Summary & Recommendations

- Practice Diligently: Mastering algorithms requires constant practice on datasets.

- Build a Strong Foundation: Understanding the core algorithms discussed here is the foundation for using more complex variants.

- Data is Paramount: Good data exploration, cleaning, and feature engineering often improve results more than choosing a complex algorithm.