Deep learning is a subfield of machine learning that uses neural networks with many processing layers (hence "deep") to learn hierarchical feature representations from data automatically. Since its resurgence around 2006, it has become a core technology driving progress in artificial intelligence (AI), achieving breakthroughs in computer vision, natural language processing, speech recognition, and more.

What is Deep Learning?

Technically, deep learning is a type of representation learning, which is itself a method within machine learning. Machine learning, in turn, is one approach to achieving artificial intelligence. Thus deep learning, representation learning, machine learning, and AI form a nested hierarchy, each contained in the next.

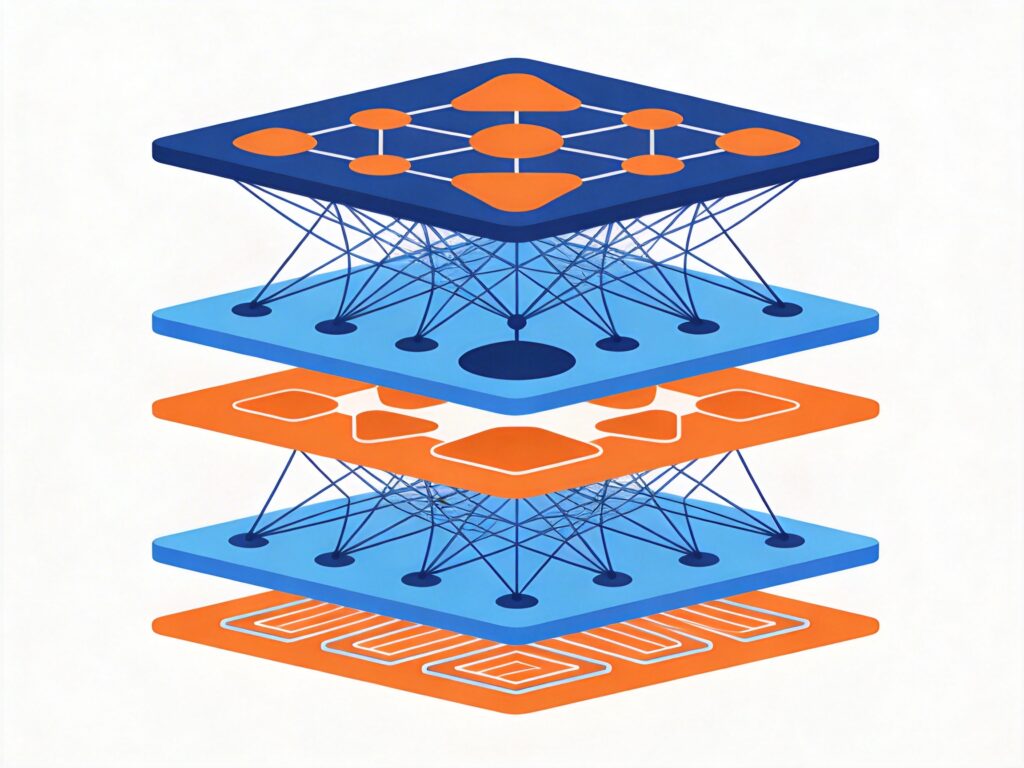

The core of a deep learning model is its "deep" architecture. Through multiple layers of nonlinear transformations, the model extracts and combines features from raw input data step by step. Shallow layers typically learn simple features like edges and colors, while deeper layers combine these into more abstract, high-level concepts (like object parts or shapes). This ability to learn features automatically distinguishes it from traditional machine learning methods that require manual feature engineering.

The term "depth" can be understood in two main ways: the depth of the computational graph (the length of the path from input to output) and the depth of the conceptual hierarchy (the level of feature abstraction the model represents). Both perspectives highlight deep learning's power to approximate complex functions through multi-layer nonlinear transformations.

Common Models

Several classic neural network architectures fall under the deep learning umbrella.

Multilayer Perceptron (MLP)

The MLP is the most basic deep feedforward neural network, consisting of an input layer, one or more hidden layers, and an output layer, with full connections between adjacent layers. It introduces nonlinearity via activation functions, enabling it to solve problems like XOR that a single-layer perceptron cannot, because XOR is not linearly separable. Training typically uses the backpropagation algorithm with gradient descent.
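
The XOR point can be made concrete with a minimal sketch. The weights below are hand-picked for illustration rather than learned, and a hard threshold stands in for the activation function; a network trained with backpropagation would use a differentiable activation instead. The names `step` and `xor_mlp` are hypothetical.

```python
def step(z):
    """Threshold activation: 1 if z > 0, else 0."""
    return 1 if z > 0 else 0


def xor_mlp(x1, x2):
    """Two-layer perceptron with hand-picked (not learned) weights."""
    # Hidden unit h1 fires for OR(x1, x2); h2 fires for AND(x1, x2).
    h1 = step(1.0 * x1 + 1.0 * x2 - 0.5)
    h2 = step(1.0 * x1 + 1.0 * x2 - 1.5)
    # The output fires when OR is true but AND is not, which is XOR.
    return step(1.0 * h1 - 2.0 * h2 - 0.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", xor_mlp(a, b))
# prints:
# 0 0 -> 0
# 0 1 -> 1
# 1 0 -> 1
# 1 1 -> 0
```

No single threshold unit can produce this table, but one hidden layer with two units is enough.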

Convolutional Neural Network (CNN)

CNNs are designed for grid-like data (e.g., images). Their core components are convolutional layers and pooling layers. Convolution shares the same kernel weights across all spatial positions, which drastically reduces the number of parameters, while pooling downsamples feature maps and provides a degree of invariance to small translations. From LeNet to ResNet, CNN architectures have grown deeper and more optimized, becoming foundational in computer vision.
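
As a rough illustration of weight sharing and pooling, the sketch below slides one 2x2 kernel over a toy image and then max-pools the result. It is written in plain Python for readability, assuming no padding and stride 1; the function names and the edge-detecting kernel are illustrative choices, not part of any framework.

```python
def conv2d(image, kernel):
    """Valid (no padding), stride-1 cross-correlation, as used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def max_pool2d(fmap, size=2):
    """Non-overlapping max pooling; rows/columns that do not fit are dropped."""
    return [
        [
            max(fmap[i + di][j + dj]
                for di in range(size) for dj in range(size))
            for j in range(0, len(fmap[0]) - size + 1, size)
        ]
        for i in range(0, len(fmap) - size + 1, size)
    ]


# 5x5 toy image: dark columns on the left, bright columns on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# The same four weights are reused at every position (weight sharing).
edge_kernel = [[-1, 1], [-1, 1]]

features = conv2d(image, edge_kernel)
pooled = max_pool2d(features)
print(features)  # [[0, 2, 0, 0], [0, 2, 0, 0], [0, 2, 0, 0], [0, 2, 0, 0]]
print(pooled)    # [[2, 0], [2, 0]]
```

The kernel has four parameters regardless of image size, whereas a fully connected layer mapping the same 25 inputs to 16 outputs would need 400 weights.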

Recurrent Neural Network (RNN) & Long Short-Term Memory (LSTM)

RNNs are designed for sequential data. Their hidden state carries information from earlier time steps, which lets them model dependencies within a sequence. LSTM is a key RNN variant that uses gating mechanisms (input, forget, and output gates) and a cell state to mitigate the vanishing gradient problem, enabling the learning of long-range dependencies. Exploding gradients are usually handled separately, for example with gradient clipping.
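
The gating mechanism can be sketched as a single LSTM time step. This is a simplified scalar version (one input, one hidden unit) following the standard LSTM formulation; the parameter dictionary `p` and its gate names are assumptions made for this example, and the values are set by hand rather than learned.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, p):
    """One LSTM time step for scalar input, hidden state, and cell state.

    `p` maps each gate name to hypothetical (w_x, w_h, bias) parameters.
    """
    def pre(name):
        w_x, w_h, b = p[name]
        return w_x * x + w_h * h_prev + b

    i = sigmoid(pre("input"))        # how much new information to write
    f = sigmoid(pre("forget"))       # how much of the old cell state to keep
    o = sigmoid(pre("output"))       # how much of the cell state to expose
    g = math.tanh(pre("candidate"))  # candidate value to write

    c = f * c_prev + i * g           # additive cell-state update
    h = o * math.tanh(c)             # new hidden state
    return h, c


# Hand-set gates: forget gate near 1, input gate near 0, so the cell
# state is carried through the sequence almost unchanged.
params = {
    "input": (0.0, 0.0, -20.0),
    "forget": (0.0, 0.0, 20.0),
    "output": (0.0, 0.0, 20.0),
    "candidate": (1.0, 0.0, 0.0),
}
h, c = 0.0, 1.0
for x in [0.5, -0.3, 0.8] * 10:  # 30 time steps
    h, c = lstm_step(x, h, c, params)
print(round(c, 6))  # 1.0
```

Because the cell state is updated additively and scaled only by the forget gate, information (and gradient) can survive many time steps when that gate stays close to 1.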

Deep Autoencoder

An autoencoder is an unsupervised model that learns an efficient encoding (representation) of input data. Its goal is to reconstruct the input via an encoder and a decoder, forcing the intermediate encoding to capture key data features. Stacking multiple hidden layers creates a deep autoencoder, useful for dimensionality reduction, denoising, and feature extraction.
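
A minimal sketch of the encode-then-reconstruct idea follows. It is deliberately a shallow, linear autoencoder (2 inputs, a 1-dimensional code, 2 outputs) trained with full-batch gradient descent on toy data; the initial weights, learning rate, and step count are arbitrary assumptions, and a deep autoencoder would stack nonlinear layers instead.

```python
def train_linear_autoencoder(data, steps=500, lr=0.01):
    """Fit a 2 -> 1 -> 2 linear autoencoder with full-batch gradient descent."""
    enc = [0.1, 0.1]   # encoder weights: code z = enc . x
    dec = [0.1, 0.1]   # decoder weights: reconstruction = dec * z
    n = len(data)
    losses = []
    for _ in range(steps):
        g_enc, g_dec, loss = [0.0, 0.0], [0.0, 0.0], 0.0
        for x in data:
            z = enc[0] * x[0] + enc[1] * x[1]            # encode to 1-D
            err = [dec[k] * z - x[k] for k in range(2)]  # reconstruction error
            loss += (err[0] ** 2 + err[1] ** 2) / n
            # Gradient of the squared error with respect to the code z.
            dz = 2 * (err[0] * dec[0] + err[1] * dec[1]) / n
            for k in range(2):
                g_dec[k] += 2 * err[k] * z / n
                g_enc[k] += dz * x[k]
        losses.append(loss)
        enc = [enc[k] - lr * g_enc[k] for k in range(2)]
        dec = [dec[k] - lr * g_dec[k] for k in range(2)]
    return enc, dec, losses


# Toy data on the line x2 = 2 * x1: one number is enough to describe a point.
data = [(1.0, 2.0), (2.0, 4.0), (-1.0, -2.0), (0.5, 1.0)]
enc, dec, losses = train_linear_autoencoder(data)
print(f"reconstruction loss: {losses[0]:.3f} -> {losses[-1]:.6f}")
```

The 1-dimensional bottleneck forces the model to discover the single direction along which the data varies, which is the dimensionality-reduction use case in miniature.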

Optimization Methods

Training deep networks requires efficient optimizers to minimize the loss function.

- Stochastic Gradient Descent (SGD): Updates parameters using the gradient computed from a single sample or a small mini-batch. It is simple, but because each gradient is a noisy estimate, it can converge slowly and oscillate.

- Adaptive Optimizers: Adjust the learning rate per parameter.

  - Adagrad: Good for sparse features but learning rate can decay too quickly.

  - RMSprop / Adadelta: Use a moving average of squared gradients to address Adagrad's aggressive decay.

  - Adam: Combines ideas from momentum and RMSprop. Often converges quickly and stably, making it one of the most popular optimizers today.
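
The difference between a plain gradient step and an adaptive one can be sketched on a one-dimensional toy loss, f(x) = x². The Adam update below follows the commonly published form with bias correction; the hyperparameter values and the `state` dictionary layout are assumptions chosen for the example.

```python
import math


def sgd_step(x, grad, lr=0.1):
    """Plain gradient descent update."""
    return x - lr * grad


def adam_step(x, grad, state, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update; `state` holds the step count and both moving averages."""
    state["t"] += 1
    state["m"] = beta1 * state["m"] + (1 - beta1) * grad       # momentum term
    state["v"] = beta2 * state["v"] + (1 - beta2) * grad ** 2  # RMSprop-style term
    m_hat = state["m"] / (1 - beta1 ** state["t"])             # bias correction
    v_hat = state["v"] / (1 - beta2 ** state["t"])
    return x - lr * m_hat / (math.sqrt(v_hat) + eps)


def grad_f(x):
    """Gradient of the toy loss f(x) = x**2."""
    return 2 * x


x_sgd = 5.0
x_adam, state = 5.0, {"t": 0, "m": 0.0, "v": 0.0}
for _ in range(200):
    x_sgd = sgd_step(x_sgd, grad_f(x_sgd))
    x_adam = adam_step(x_adam, grad_f(x_adam), state)
print(x_sgd, x_adam)
```

Note that the first Adam step has a magnitude of roughly `lr` whatever the gradient scale, because the update divides the gradient average by the root of the squared-gradient average. This normalization is what "adjusting the learning rate per parameter" means in practice.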

Key Techniques

Common techniques to prevent overfitting, speed up training, and improve stability include:

- Activation Functions: Functions like ReLU, Sigmoid, and Tanh introduce nonlinearity. ReLU and its variants (e.g., Leaky ReLU) are widely used because they help mitigate the vanishing gradient problem.

- Dropout: Randomly "drops" (deactivates) neurons during training, forcing the network to learn more robust features and acting as a regularizer.

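- Batch Normalization: Normalizes the inputs to a layer over the current mini-batch to zero mean and unit variance, then applies a learnable scale and shift. This stabilizes the distribution of activations, which allows higher learning rates, faster training, and reduced sensitivity to initialization.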

- Early Stopping: Halts training when validation performance stops improving to prevent overfitting.

- Regularization: Adds an L1 or L2 penalty on weights to the loss function to constrain model complexity and improve generalization.
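
Several of the techniques above are short enough to sketch directly. The helpers below are simplified, framework-free illustrations: dropout is shown in its common "inverted" form (surviving activations are rescaled during training), batch normalization handles a single feature and omits the running statistics used at inference time, and the `patience` rule for early stopping is one common convention among several. All function names are hypothetical.

```python
import math
import random


def dropout(activations, p_drop, rng, training=True):
    """Inverted dropout: zero each unit with probability p_drop, rescale the rest."""
    if not training or p_drop == 0.0:
        return list(activations)
    keep = 1.0 - p_drop
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature over a mini-batch, then scale and shift."""
    mean = sum(batch) / len(batch)
    var = sum((v - mean) ** 2 for v in batch) / len(batch)
    return [gamma * (v - mean) / math.sqrt(var + eps) + beta for v in batch]


def l2_penalty(weights, lam):
    """L2 regularization term to be added to the loss."""
    return lam * sum(w * w for w in weights)


def early_stopping_epoch(val_losses, patience):
    """Return the epoch at which training would stop, or None if it never stops."""
    best, best_epoch = float("inf"), -1
    for epoch, loss in enumerate(val_losses):
        if loss < best:
            best, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch
    return None


rng = random.Random(0)
print(dropout([1.0, 1.0, 1.0, 1.0], p_drop=0.5, rng=rng))
print(batch_norm([1.0, 2.0, 3.0, 4.0]))
print(early_stopping_epoch([1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.9], patience=3))  # 5
```

In the early stopping example, the best validation loss occurs at epoch 2, and training stops at epoch 5 after three epochs without improvement.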

Frameworks & Platforms

Choosing a framework greatly improves development efficiency. Popular choices include:

- TensorFlow & PyTorch: The two most widely used frameworks. TensorFlow has a vast ecosystem and mature production deployment tooling. PyTorch builds its computation graph dynamically, which makes it flexible and easy to debug, and it is favored by many researchers.

- Keras: A high-level API, now integrated into TensorFlow, known for its user-friendly interface and rapid prototyping.

- Other Frameworks: Caffe (vision-focused, largely superseded), MXNet (efficient, multi-language), CNTK (Microsoft, strong for speech), Deeplearning4j (Java-based, integrates with big data stacks).

Classic CNN Architectures

Landmark CNN architectures have driven computer vision forward:

- LeNet-5: Developed by Yann LeCun and colleagues for handwritten digit recognition, establishing the basic CNN pattern of convolution and pooling layers followed by fully connected layers.

- AlexNet: Won the 2012 ImageNet Large Scale Visual Recognition Challenge (ILSVRC), demonstrating the power of deep CNNs and popularizing ReLU and Dropout.

- VGGNet: Used repeated blocks of small (3x3) convolutions and pooling to build uniform, deep (16-19 layer) networks.

- GoogLeNet: Introduced the Inception module for multi-scale feature extraction within a layer, improving performance while controlling parameters.

- ResNet: Addressed the degradation problem, in which very deep plain networks train worse than shallower ones, via residual (skip) connections, enabling the training of networks with hundreds or even thousands of layers.
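
The residual idea behind ResNet can be sketched in a few lines: each block outputs its input plus a learned correction F(x). The version below uses small fully connected maps in place of convolutions and omits batch normalization, so it is a simplification of the published block; the function names and weights are illustrative.

```python
def relu(v):
    return [max(0.0, a) for a in v]


def linear(v, weights):
    """Matrix-vector product; `weights` is a list of rows."""
    return [sum(w * a for w, a in zip(row, v)) for row in weights]


def residual_block(x, w1, w2):
    """Compute relu(x + F(x)), where F is two linear maps with a ReLU between."""
    fx = linear(relu(linear(x, w1)), w2)
    return relu([a + b for a, b in zip(x, fx)])


x = [1.0, 2.0]
zeros = [[0.0, 0.0], [0.0, 0.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]

# With all-zero weights F(x) = 0, so the block passes x through unchanged.
print(residual_block(x, zeros, zeros))        # [1.0, 2.0]
print(residual_block(x, identity, identity))  # [2.0, 4.0]
```

Because a block can fall back to the identity simply by driving its weights toward zero, adding more blocks should not make the network harder to fit, which is the intuition behind training very deep stacks.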

Applications

Deep learning is now pervasive across AI applications:

- Computer Vision: Image classification, object detection, semantic segmentation, image generation.

- Speech Recognition: Converting audio to text, core to virtual assistants.

- Natural Language Processing (NLP): Machine translation, text classification, sentiment analysis, question answering.

- Recommendation Systems: Personalized content, product, and ad recommendations based on user behavior.

Advanced Paradigms

Advanced learning paradigms extend deep learning's capabilities:

- Transfer Learning: Leverages knowledge from a model trained on a source task for a different target task, especially useful when target data is scarce.

- Reinforcement Learning: An agent learns an optimal policy through trial-and-error interaction with an environment. Deep Reinforcement Learning (e.g., DQN, AlphaGo) uses deep neural networks as function approximators, achieving success in games and robotics.

- Generative Adversarial Networks (GANs): Use a generator and a discriminator in an adversarial game to learn to generate realistic data (images, audio, text), widely used for content creation and data augmentation.
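
The trial-and-error update at the heart of reinforcement learning can be sketched with tabular Q-learning. This swaps the neural network for a plain lookup table to keep the example small; DQN-style methods replace the table with a deep network that approximates the same values. The state names, rewards, and hyperparameters are made up for illustration.

```python
def q_update(q, state, action, reward, next_state, alpha=0.5, gamma=0.9):
    """Apply one temporal-difference update to the table `q` in place."""
    # Terminal or unknown next states contribute no future value.
    best_next = max(q[next_state].values()) if q.get(next_state) else 0.0
    target = reward + gamma * best_next
    q[state][action] += alpha * (target - q[state][action])
    return q[state][action]


# Two-state chain: s0 -> s1 -> goal, with reward 1.0 for reaching the goal.
q = {
    "s0": {"left": 0.0, "right": 0.0},
    "s1": {"left": 0.0, "right": 0.0},
}
q_update(q, "s1", "right", reward=1.0, next_state="goal")
q_update(q, "s0", "right", reward=0.0, next_state="s1")
print(q["s1"]["right"])  # 0.5
print(q["s0"]["right"])
```

After two updates the reward has already propagated one step back along the chain: the value of moving right from `s0` is positive even though that move itself earned no reward.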

Learning Resources

Online Courses

- Stanford CS231n: Convolutional Neural Networks for Visual Recognition

- Stanford CS224n: Natural Language Processing with Deep Learning

- Deep Learning Specialization (Coursera, Andrew Ng)

- Fast.ai: Practical Deep Learning for Coders

Books & Documentation

- Deep Learning (Ian Goodfellow, Yoshua Bengio, Aaron Courville) – The "Deep Learning Bible"

- Neural Networks and Deep Learning (Michael Nielsen) – Free online book

- Official documentation and tutorials for TensorFlow and PyTorch

Community & Conferences

- Conferences: NeurIPS, ICML, ICLR, CVPR, ACL

- Platforms: arXiv, Papers with Code, GitHub

The field evolves rapidly. The best way to learn is through hands-on practice, contributing to open-source projects, and staying updated with research.