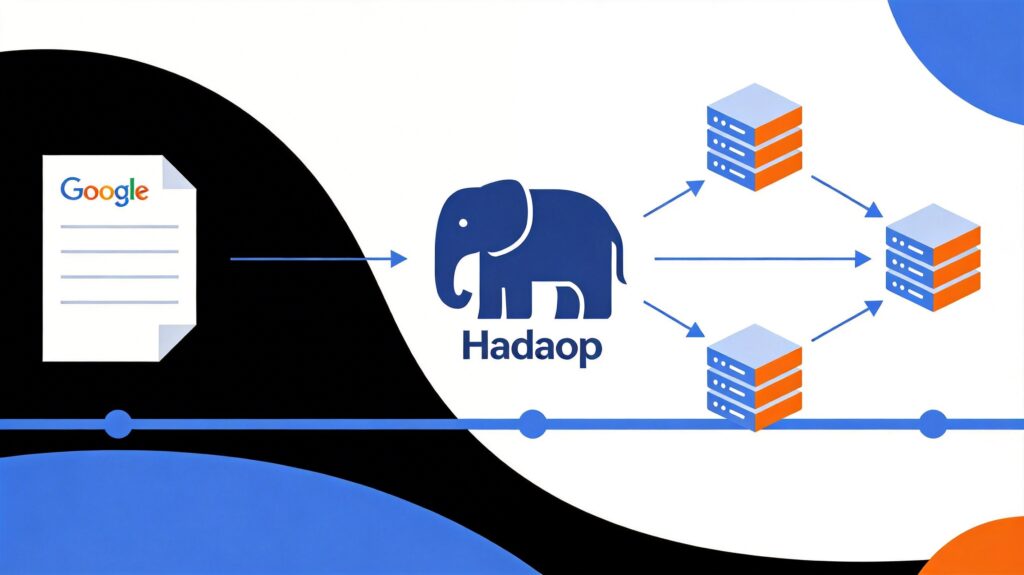

Origins: From Google's Paper to Hadoop

Modern big data processing traces its origins to Google's seminal 2004 paper, "MapReduce: Simplified Data Processing on Large Clusters." Faced with explosive growth in web pages, Google needed to process massive amounts of raw data (such as crawled documents and web request logs) to compute derived data (such as inverted indexes and web graph structures). The computation logic itself was simple, but the sheer data volume exceeded what a single machine could handle.

To manage the complexities of distributed parallel computing (task distribution, data shuffling, and fault tolerance), Google designed an abstraction inspired by the map and reduce functions found in functional programming languages such as Lisp. The model's key contribution was not the concepts themselves but their successful implementation on clusters of commodity PCs. That implementation delivered horizontal scalability and fault tolerance, and it marked a shift from centralized to distributed computing.
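The map, shuffle, and reduce steps can be illustrated with a single-process word count. This is only a minimal sketch of the programming model: the function names (`map_phase`, `shuffle`, `reduce_phase`, `run_word_count`) are hypothetical, and a real cluster would run the phases in parallel across machines and handle failures, which this sketch does not attempt.

```python
from collections import defaultdict


def word_count_mapper(document):
    """Map: emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield word, 1


def word_count_reducer(word, counts):
    """Reduce: combine all values emitted for one key."""
    return sum(counts)


def map_phase(documents, mapper):
    """Apply the mapper to every input record and flatten the results."""
    for document in documents:
        yield from mapper(document)


def shuffle(pairs):
    """Group intermediate values by key (the framework's job in MapReduce)."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(groups)


def reduce_phase(groups, reducer):
    """Apply the reducer once per key."""
    return {key: reducer(key, values) for key, values in groups.items()}


def run_word_count(documents):
    pairs = map_phase(documents, word_count_mapper)
    return reduce_phase(shuffle(pairs), word_count_reducer)


if __name__ == "__main__":
    print(run_word_count(["the quick fox", "the lazy dog"]))
```

The user supplies only the mapper and the reducer; everything else belongs to the framework, which is what makes the model easy to distribute.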

Google's internal "three treasures" were MapReduce, GFS (Google File System), and Bigtable. Because none of these were open source, the community created Hadoop, whose MapReduce and HDFS (Hadoop Distributed File System) are open-source implementations of Google's MapReduce and GFS. Bigtable's open-source counterpart is HBase. This launched the era of open-source big data frameworks.

Core Concepts of Big Data

1. What is Big Data?

The term "big data" has two common meanings:

- Large Datasets: Data volumes so large they cannot be effectively captured, managed, or processed by traditional databases or single-machine tools.

- Processing Technologies: The technology stack used to handle such massive datasets, including data ingestion, storage, computation, analysis, and visualization.

2. The 3V Model

The "3V" model, introduced in 2001 by analyst Doug Laney (then at META Group, which Gartner later acquired), outlines three key characteristics:

- Volume: Massive scale, often petabytes or exabytes, requiring distributed clusters.

- Velocity: High speed of data generation and flow, often demanding near-real-time or real-time processing.

- Variety: Diverse data sources and formats, including logs, social media, and sensor data, blending structured and unstructured data.

3. Big Data Processing Pipeline

A typical pipeline includes:

- Data Ingestion: Bringing data from source systems into the platform.

- Data Storage: Persisting data in distributed storage (e.g., HDFS).

- Computation & Analysis: Processing and analyzing data using computational frameworks.

- Result Presentation: Displaying results via reports, dashboards, or visualizations.
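The four stages can be sketched end to end in a few lines. This is a toy illustration, not a real platform: a plain Python list stands in for distributed storage, and the function names (`ingest`, `store`, `compute`, `present`) and the JSON log format with a `status` field are assumptions made for the example.

```python
import json
from collections import Counter


def ingest(raw_lines):
    """Ingestion: parse raw JSON log lines, skipping anything malformed."""
    records = []
    for line in raw_lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue
        if isinstance(record, dict):
            records.append(record)
    return records


def store(records, storage):
    """Storage: persist records (a list stands in for HDFS here)."""
    storage.extend(records)
    return storage


def compute(storage):
    """Computation: count records per HTTP status code."""
    return Counter(r["status"] for r in storage if "status" in r)


def present(counts):
    """Presentation: render a plain-text report sorted by status code."""
    return "\n".join(f"{status}: {n}" for status, n in sorted(counts.items()))


if __name__ == "__main__":
    raw = ['{"status": 200}', "not json", '{"status": 404}', '{"status": 200}']
    print(present(compute(store(ingest(raw), []))))
```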

4. Defining a Big Data Processing Framework

A big data processing framework is a software framework or engine responsible for computing over big data. It reads data from storage or message queues and executes computational logic to extract information. The term "big data computing framework" is often used synonymously. Sometimes "processing engine" refers specifically to the core computational component (e.g., MapReduce in Hadoop), while "framework" covers a broader set of components.

Classification of Data Processing Frameworks

Based on data nature and latency requirements, frameworks are primarily categorized into three types:

- Batch Processing Systems: Process finite, static datasets, outputting results after job completion (e.g., Apache Hadoop).

- Stream Processing Systems: Process continuous, unbounded data streams with low latency (e.g., Apache Storm, Apache Samza).

- Hybrid Processing Systems: Support both batch and stream processing with a unified programming model (e.g., Apache Spark, Apache Flink).

Batch Processing Systems

Batch processing systems are designed for large-scale, static, persisted data. The datasets are finite (e.g., historical logs), and results are produced only after the whole job completes. Jobs tend to be long-running, which suits latency-insensitive scenarios such as data warehousing and offline reporting.

Apache Hadoop

The foundational open-source big data project. Core components:

- HDFS: Distributed file system for high-throughput, fault-tolerant storage.

- YARN: Resource management and job scheduling system.

- MapReduce: Disk-based batch processing engine.

MapReduce's verbose programming model and heavy disk I/O, which is especially costly for iterative computations, have reduced its direct use. HDFS and YARN, however, remain foundational for many ecosystem tools, and Hadoop is still valuable for learning distributed computing principles.

Stream Processing Systems

These systems process continuous, unbounded data streams in real time, like a never-ending "data processing pipeline." They follow one of two processing styles:

- Record-at-a-time: True per-record processing with minimal latency.

- Micro-batch: Processing small batches of data within short time windows.

Use cases include real-time monitoring, fraud detection, and real-time recommendations.
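The difference between the two styles can be sketched with Python generators. This is an illustrative sketch only: the function names are hypothetical, and the micro-batch here is cut by record count for simplicity, whereas real micro-batch engines typically cut batches by time interval.

```python
def record_at_a_time(stream, handle):
    """Process each record the moment it arrives and emit the result."""
    for record in stream:
        yield handle(record)


def micro_batch(stream, batch_size):
    """Buffer records into small batches and emit each batch as a unit."""
    batch = []
    for record in stream:
        batch.append(record)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        # Flush the final, possibly smaller, batch.
        yield batch


if __name__ == "__main__":
    events = [1, 2, 3, 4, 5]
    print(list(record_at_a_time(events, lambda x: x * 2)))
    print([sum(b) for b in micro_batch(events, 2)])
```

In the record-at-a-time style, a result is available as soon as each record is handled. In the micro-batch style, the first record of a batch waits until the batch fills, which is the source of the extra latency.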

Apache Storm

A low-latency distributed stream processing framework. Core concepts:

- Topology: A directed acyclic graph of Spouts and Bolts defining the processing logic.

- Spout: Data source that reads from external systems and injects streams.

- Bolt: Processing unit for operations like filtering, aggregation, and computation.

Storm provides "at-least-once" semantics by default. Its Trident extension offers "exactly-once" semantics, but it does so with a micro-batch model, which adds latency.
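The Spout and Bolt roles can be sketched as chained Python generators. This is not Storm's API (Storm topologies are normally written against its Java API and run distributed across workers); the names `sentence_spout`, `split_bolt`, `filter_bolt`, `count_bolt`, and `run_topology` are hypothetical and only show how data flows through a linear topology.

```python
from collections import Counter


def sentence_spout(sentences):
    """Spout: inject records from an external source into the topology."""
    for sentence in sentences:
        yield sentence


def split_bolt(stream):
    """Bolt: split each sentence into individual words."""
    for sentence in stream:
        for word in sentence.split():
            yield word


def filter_bolt(stream, stop_words):
    """Bolt: drop words that appear in the stop-word set."""
    for word in stream:
        if word not in stop_words:
            yield word


def count_bolt(stream):
    """Bolt: keep a running count and emit (word, count) on every update."""
    counts = Counter()
    for word in stream:
        counts[word] += 1
        yield word, counts[word]


def run_topology(sentences):
    """Wire spout -> split -> filter -> count, a simple linear DAG."""
    stream = sentence_spout(sentences)
    stream = split_bolt(stream)
    stream = filter_bolt(stream, {"the", "a"})
    return list(count_bolt(stream))


if __name__ == "__main__":
    print(run_topology(["the cat", "a cat sat"]))
```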

Apache Samza

Samza is a stream processing framework that is deeply integrated with Apache Kafka. Its architecture has three layers:

- Streaming Layer: Kafka provides durable, replayable message streams.

- Execution Layer: YARN handles resource management and isolation.

- Processing Layer: Samza API for writing stream processing logic.

For teams with existing Hadoop and Kafka infrastructure, Samza makes good use of those resources and lets different teams share data through Kafka topics.
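Why a durable, replayable stream matters can be shown with a toy append-only log and a consumer that tracks its own read position. This is a sketch under stated assumptions, not Kafka's or Samza's API: `ReplayableLog` and `Consumer` are hypothetical classes, and the `commit` flag simulates a crash that happens after processing but before the position is saved.

```python
class ReplayableLog:
    """Append-only log; a stand-in for one partition of a Kafka topic."""

    def __init__(self):
        self._messages = []

    def append(self, message):
        """Store a message and return its offset."""
        self._messages.append(message)
        return len(self._messages) - 1

    def read_from(self, offset):
        """Return (offset, message) pairs starting at the given offset."""
        return list(enumerate(self._messages))[offset:]


class Consumer:
    """Reads from the log and checkpoints the next offset to read."""

    def __init__(self, log):
        self.log = log
        self.checkpoint = 0
        self.processed = []

    def poll(self, max_messages, commit=True):
        batch = self.log.read_from(self.checkpoint)[:max_messages]
        for _offset, message in batch:
            self.processed.append(message)
        if commit and batch:
            # Save progress only after the batch has been processed.
            self.checkpoint = batch[-1][0] + 1


if __name__ == "__main__":
    log = ReplayableLog()
    for m in ["a", "b", "c"]:
        log.append(m)
    consumer = Consumer(log)
    consumer.poll(2)                # processes a, b and checkpoints
    consumer.poll(2, commit=False)  # processes c, "crashes" before commit
    consumer.poll(2)                # replays c from the last checkpoint
    print(consumer.processed, consumer.checkpoint)
```

Because the log keeps messages after they are read, the consumer can resume from its last checkpoint without losing data. The replayed message is processed twice, which is the at-least-once behavior that replay-based recovery produces unless processing is made idempotent.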

Hybrid Processing Systems

These systems aim to process both batch and streaming data with a single API or framework, simplifying the tech stack and developer experience.

Apache Spark

Spark significantly improves performance via in-memory computing and an advanced DAG scheduler. Key advantages:

- In-Memory Computing: Uses Resilient Distributed Datasets (RDDs) to cache intermediate results, reducing disk I/O, ideal for iterative algorithms.

- Unified API: Rich set of operators (Transformations and Actions) for concise code.

- Multi-Language Support: Scala, Java, Python, R.

- Ecosystem: Includes Spark SQL (interactive queries), Spark Streaming (stream processing), MLlib (machine learning), GraphX (graph processing).

Spark Streaming uses a micro-batch model, with latency typically in seconds. While its absolute latency is higher than native stream processors like Storm, its unified model and robust ecosystem make it one of the most widely used frameworks.
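The ideas of lazy Transformations, eager Actions, and cached intermediate results can be sketched in plain Python. `MiniRDD` below is a hypothetical toy class, not PySpark; it borrows method names such as `map`, `filter`, `cache`, `collect`, and `count` only to mirror the concepts, and it ignores partitioning, distribution, and lineage-based recovery entirely.

```python
class MiniRDD:
    """Toy dataset: transformations are lazy, actions trigger computation."""

    def __init__(self, compute):
        self._compute = compute  # zero-argument function producing a list
        self._use_cache = False
        self._cached = None
        self.evaluations = 0     # how many times this dataset was computed

    @classmethod
    def from_list(cls, data):
        return cls(lambda: list(data))

    def map(self, fn):
        """Transformation: returns a new dataset, computes nothing yet."""
        return MiniRDD(lambda: [fn(x) for x in self._materialize()])

    def filter(self, predicate):
        """Transformation: returns a new dataset, computes nothing yet."""
        return MiniRDD(lambda: [x for x in self._materialize() if predicate(x)])

    def cache(self):
        """Mark this dataset to be kept in memory after first computation."""
        self._use_cache = True
        return self

    def _materialize(self):
        if self._use_cache and self._cached is not None:
            return self._cached
        self.evaluations += 1
        result = self._compute()
        if self._use_cache:
            self._cached = result
        return result

    def collect(self):
        """Action: compute and return all elements."""
        return list(self._materialize())

    def count(self):
        """Action: compute and return the number of elements."""
        return len(self._materialize())


if __name__ == "__main__":
    squares = MiniRDD.from_list(range(5)).map(lambda x: x * x).cache()
    evens = squares.filter(lambda x: x % 2 == 0)
    print(evens.collect(), evens.count(), squares.evaluations)
```

Two actions run against `evens`, yet the cached `squares` dataset is computed only once. Without `cache()`, each action would recompute it from the source, which is the repeated work that in-memory caching avoids in iterative algorithms.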

Apache Flink

Flink adopts the opposite philosophy from Spark: rather than treating a stream as a series of small batches, it treats batch processing as a special case of stream processing (a bounded stream). This "stream-first" design aligns with the Kappa architecture. Core concepts:

- DataStream API: For unbounded data streams, supporting true record-at-a-time processing with very low latency.

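- DataSet API: For batch processing, essentially processing bounded streams. (Later Flink releases deprecate this API in favor of running the DataStream and Table APIs in batch mode, so check the documentation for your version.)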

- Operator: Transforms and computes on data streams.

Flink also provides a Table API (SQL-like), CEP (complex event processing), graph processing, and machine learning libraries. It performs well in benchmarks, especially for stream processing latency and throughput, though its community is smaller than Spark's and it has fewer large-scale production deployments.
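The idea that batch is a bounded stream can be illustrated by running one operator unchanged over both a finite and an infinite source. This is a conceptual sketch in plain Python, not Flink's API; `running_total`, `bounded_source`, and `unbounded_source` are hypothetical names.

```python
import itertools


def running_total(stream):
    """Operator: emit the running sum after every incoming record."""
    total = 0
    for value in stream:
        total += value
        yield total


def bounded_source():
    """A finite dataset, i.e. what a batch job would read."""
    yield from [1, 2, 3, 4]


def unbounded_source():
    """An endless stream of 1, 2, 3, ..."""
    yield from itertools.count(1)


if __name__ == "__main__":
    # Batch: the stream ends, so the final value is the job's result.
    print(list(running_total(bounded_source()))[-1])
    # Streaming: the stream never ends, so results are consumed as they arrive.
    print(list(itertools.islice(running_total(unbounded_source()), 4)))
```

The operator does not know or care whether its input ends. Only the source differs, which is the sense in which a batch job is just a stream job whose input happens to be finite.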

How to Choose a Big Data Processing Framework?

For Learners

- Hadoop: Understand the fundamentals of distributed computing and storage. A recommended starting point, especially HDFS and YARN.

- Spark: The current mainstream choice for enterprise applications, with a vibrant ecosystem and community. A high-value skill for job seekers and a practical default for hands-on projects.

- Flink: Represents the "next generation" of stream processing with high growth potential. Suitable for learners focused on cutting-edge technology.

For Enterprise Applications

- Pure Batch, Cost-Sensitive: Consider Hadoop MapReduce.

- Pure Stream, Ultra-Low Latency: Storm or Flink are better choices.

- Existing Kafka + Hadoop Ecosystem: Samza integrates well.

- Hybrid Batch/Stream, Unified Stack: Spark is a mature, stable choice. If extreme stream processing performance is required and you are open to newer technology, evaluate Flink.

Recommended Learning Resources

The best starting point is always the official documentation. The following books are also useful references:

Hadoop Related

- "Hadoop: The Definitive Guide" (the "Elephant Book"): Comprehensive coverage of Hadoop core and ecosystem.

- "YARN: The Definitive Guide": In-depth exploration of the resource management framework.

Spark Related

- "Learning Spark: Lightning-Fast Data Analytics": Classic introductory book covering core concepts.

- "Advanced Analytics with Spark": For those with a foundation, focusing on data analysis and machine learning applications.

Note: Technology evolves rapidly, and books may become outdated. Always supplement with the latest official documentation. For newer frameworks like Samza and Flink, high-quality books in English are limited, making official docs and community resources essential.