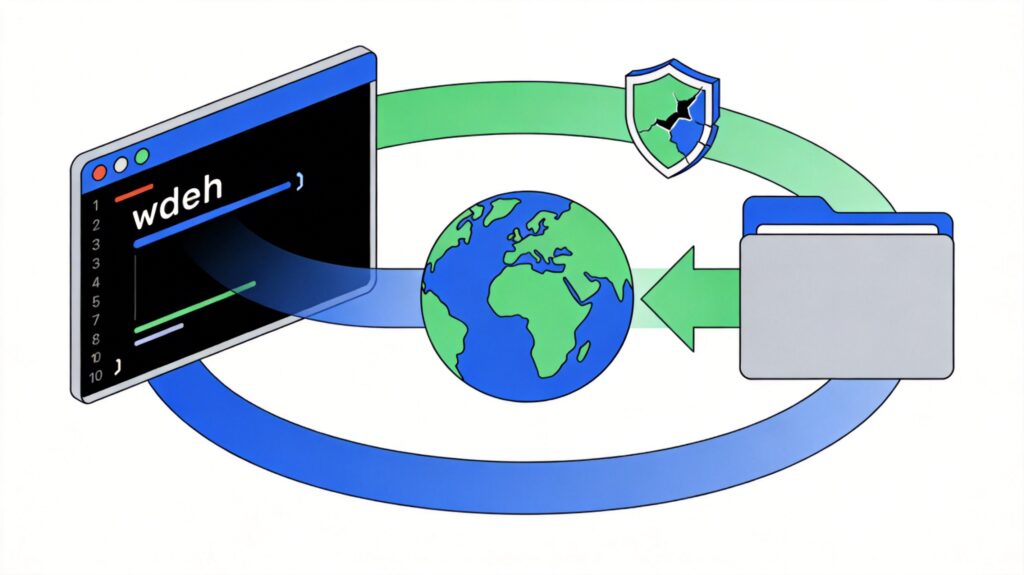

wget Command Basics and Bypassing Hotlink Protection

wget is a powerful command-line download tool supporting HTTP, HTTPS, and FTP protocols. It is commonly used for batch file downloads, website mirroring, and handling download scenarios requiring specific request headers.

Core Parameters

- -r: Recursive download. For HTTP/HTTPS, downloads the specified page and the resources it links to (five levels deep by default, adjustable with -l); for FTP, downloads all files in the specified directory.

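- -m: Mirror mode. Turns on recursion (-r) and timestamp checking (-N) with unlimited recursion depth, ideal for creating website mirrors.

- -k: Convert links. After the download finishes, rewrites links in the downloaded HTML files so they point to the local copies, making the result suitable for local browsing.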

- -N: Timestamp checking. Skips a file when the local copy is at least as new as the remote one and has the same size; otherwise the file is downloaded again.

- -p: Download all resources required by the page (e.g., images, CSS, JS).

- -np: No parent. Recurses only within the specified directory and its subdirectories.

- -nd: No directories. All files are downloaded to the current directory.

- --accept: Specify accepted file extensions (e.g., --accept=iso,jpg).

- --reject: Specify rejected file extensions.

- -H: Span hosts. Used when website resources (e.g., images) are hosted on other domains.

Practical Examples

Example 1: Bypassing Referer Hotlink Protection

When a target website checks the HTTP Referer header for hotlink protection, use the --referer parameter to forge the referrer and download protected files.

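    wget -E --referer http://example.com/ -r -m -k http://img.example.com/2015/

This command will:

- Set the Referer header to http://example.com/.

- Recursively download all files under http://img.example.com/2015/ (-r is already implied by -m, so it is redundant here but harmless).

- Enable mirror mode (-m) and link conversion (-k).

- Save HTML pages with an .html extension when the URL lacks one (-E).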

Note: Referer-based protection is basic. Modern sites often use more secure methods like token verification, signatures, or cookie checks. For such advanced protection, wget parameters alone may be insufficient; analyzing authentication logic or using specialized tools may be required.
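Before forging a Referer for a full download, it can help to confirm that the server actually checks the header. The following is a minimal sketch, not a definitive test: the function name check_referer is hypothetical, and it assumes the server answers a blocked request with an error status such as 403. Sites that return a placeholder image with status 200 would not be detected this way.

```shell
#!/usr/bin/env bash
# Hypothetical helper (name is an assumption, not part of wget):
# probes a URL with and without a Referer header and reports the result.
check_referer() {
  local url="$1" referer="$2"
  local plain=blocked forged=blocked

  # --spider checks that the resource exists without saving it;
  # -q suppresses wget's normal output. wget exits non-zero when
  # the server responds with an error status.
  if wget -q --spider "$url"; then plain=ok; fi
  if wget -q --spider --referer="$referer" "$url"; then forged=ok; fi

  echo "without-referer=$plain with-referer=$forged"
}

# Usage, with the placeholder URLs from the example above:
# check_referer http://img.example.com/2015/ http://example.com/
```

If the probe reports ok only when the Referer is supplied, Referer checking is likely the only barrier; if both probes are blocked, one of the stronger mechanisms mentioned in the note is probably in play.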

Example 2: Downloading an Entire Site Without Protection

For unprotected websites, you can recursively download all pages and resources directly.

    wget -r -p -np -k http://xxx.com/xxx/

This command will:

- Recursively download all content under the specified path.

- Download all resources needed by pages (-p).

- Not ascend to parent directories (-np).

- Convert links to local relative paths (-k).

Example 3: Downloading Files from a Specific Directory

If you only need files from a specific directory and don't want complex local directory structures, combine -np and -nd.

    wget -r -np -nd http://example.com/packages/

This command will:

- Recursively download all files in the packages directory.

- Not ascend to parent directories.

- Save all files directly to the current directory without creating subfolders.

To filter by file type, add --accept or --reject:

    wget -r -np -nd --accept=iso http://example.com/centos-5/i386/

This command downloads only files with the .iso extension.

Example 4: Mirroring an Entire Website (Including Cross-Domain Resources)

To fully mirror a website, including resources hosted on other domains (e.g., images, stylesheets), use:

    wget -m -k -H http://www.example.com/

This command will:

- Enable mirror mode (-m).

- Convert links (-k).

- Allow cross-host downloads (-H), ensuring external resources are fetched.
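Used on its own, -H lets recursion follow links to any host, so a mirror can grow far beyond the intended site. One common way to contain it is wget's --domains option, which takes a comma-separated list of domains to follow. The sketch below is illustrative only: the wrapper name mirror_with_hosts and the DRY_RUN variable are assumptions, and img.example.com stands in for whatever host actually serves the site's assets. By default it prints the command instead of running it.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper (mirror_with_hosts and DRY_RUN are assumptions):
# mirrors a site while limiting -H to an explicit list of domains.
mirror_with_hosts() {
  local url="$1"
  local domains="$2"   # comma-separated domains wget may follow links into

  # Reuse the positional parameters to hold the full command line.
  set -- wget -m -k -H --domains="$domains" "$url"

  if [ "${DRY_RUN:-1}" = 1 ]; then
    echo "$*"          # dry run: show the command only
  else
    "$@"               # DRY_RUN=0: actually run wget
  fi
}

mirror_with_hosts http://www.example.com/ www.example.com,img.example.com
```

Running with DRY_RUN=0 would perform the download; keeping the dry run as the default makes it easy to review the flags first.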

Important Notes and Best Practices

- Comply with Laws and robots.txt: Ensure you have permission before downloading any website content, and respect the site's robots.txt rules and relevant laws. Unauthorized scraping may constitute infringement.

- Control Request Rate: Add --limit-rate=200k to limit download speed, or use --wait=2 to set intervals between requests, avoiding excessive load on the target server.

- Handle Authentication: If a site requires login, use the --user and --password parameters (note password security), or add cookies via --header.

- Updates and Maintenance: wget command parameters may change with version updates. Check wget --help or the official documentation for the latest information.
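The rate-control advice above can be folded into a small wrapper so that the limits are applied every time. This is a sketch under stated assumptions: the function name polite_fetch and the DRY_RUN variable are hypothetical, and the defaults simply reuse the 200k and 2-second values mentioned in the list. It prints the command unless DRY_RUN is set to 0.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper (polite_fetch and DRY_RUN are assumptions):
# recursive download with a bandwidth cap and a pause between requests.
polite_fetch() {
  local url="$1"
  local rate="${2:-200k}"   # value passed to --limit-rate
  local pause="${3:-2}"     # seconds passed to --wait

  set -- wget -r -np --limit-rate="$rate" --wait="$pause" "$url"

  if [ "${DRY_RUN:-1}" = 1 ]; then
    echo "$*"               # dry run: show the command only
  else
    "$@"                    # DRY_RUN=0: actually run wget
  fi
}

polite_fetch http://example.com/packages/
```

A stricter call such as polite_fetch http://example.com/packages/ 50k 5 lowers the cap and lengthens the pause for servers that are more sensitive to load.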